Building with AI at Upvest

How we are adopting AI, what we have learned, and what we are still figuring out.

Upvest is embedding AI across the organisation, with eighty percent of features being delivered with AI assistance and AI tools sitting inside almost every workflow. Three decisions made the most difference: making the best agentic tools available to everyone, giving every employee a €20,000 annual token budget, and building shared infrastructure in the Upvest AI Toolkit and MCP Gateway to make adoption easy and secure. The result is that engineers now operate as leads of their own agent teams and ship more efficiently. Producing code is not where we spend most of our time; understanding what our clients need, deciding what to build, validating correctness, and proving it is safe to ship are where the cost and judgement now sit, and that is where Upvest is investing next.

AI has been part of how we operate for the better part of a year. If you walk through Upvest today, you will notice that AI sits inside almost every workflow we run. Pull requests are being shipped with the help of agents. Product managers leverage Gemini to research and draft specs. Our Operations team automates workflows with n8n. Our Client Impact team handles client inquiries faster and builds standardised reporting and monitoring structures with NotebookLM. Eighty percent of all our features are now developed with the assistance of AI, and that number climbs every week.

Upvest did not arrive at AI fresh. Long before agents became headline news, our engineers were using GitHub Copilot every day, Incident.io was helping us detect and resolve incidents, and Datadog was flagging anomalies that humans would have missed. These tools normalised the idea that AI sits next to you while you work, and they earned trust over time. So when the next generation of agentic tools landed, our engineers did not need convincing that agents would be useful. They needed a way to use them well, at scale, on a regulated investment infrastructure codebase that does not forgive shortcuts.

In early March 2026, we added another tool to our belt: we made Claude available across the entire organisation. Our engineers love technology, and many were already experimenting with agentic tooling on their own. The role of management was to unblock them: lower the barrier to experimentation, put the best tools we could find in their hands, and trust them to do the rest. Around the same time, after our Series C Extension, we made another deliberate call: every Upvenger gets a €20,000 per year budget to spend on tokens. The engineering team's reaction was immediate enthusiasm, and the experimentation kicked in.

That budget was a statement. We wanted engineers to stop counting prompts, to try things, fail, retry and iterate without checking a meter. The cost of machine intelligence is becoming the cheapest thing in our value chain, and the real waste would be to throttle the people whose work translates directly into faster, more reliable outcomes for our clients.

In a matter of days, a small group of engineers and product managers became deeply fluent. They stopped using Claude as a smarter autocomplete and started using it as a teammate. They built workflows. They wrote agents to specialise in tasks they cared about. They debated in Slack about what made a good prompt, which model is better at each task, and how to share what they were learning. That was the beginning of the Engineering AI Guild, and they have effectively been the driving force for the rest of the organisation since.

Out of those weeks of experimentation came something we now rely on every day: the Upvest AI Toolkit. On the surface it is a curated collection of agents, rules and skills that any Upvenger can install with a single command. Underneath, it is something more important. It is the place where the company stores how we like to work.

The toolkit ships with thirty specialist agents covering the full lifecycle, from a Software Architect that helps plan a refactor to a Judge that arbitrates between conflicting reviewers, alongside thirty-six skills and forty rules that capture our conventions. Profiles let an engineer load the right knowledge set for the task at hand, whether that is data work, security review, or product engineering.

The toolkit is not finished, and it never will be. The point is not the artifact. The point is that one engineer's breakthrough on Tuesday becomes everyone's baseline by Friday. When someone figures out a better way to investigate an anomaly or to translate a regulatory requirement into acceptance criteria, they package it as a skill, others install it, and the bar rises for the whole organisation.

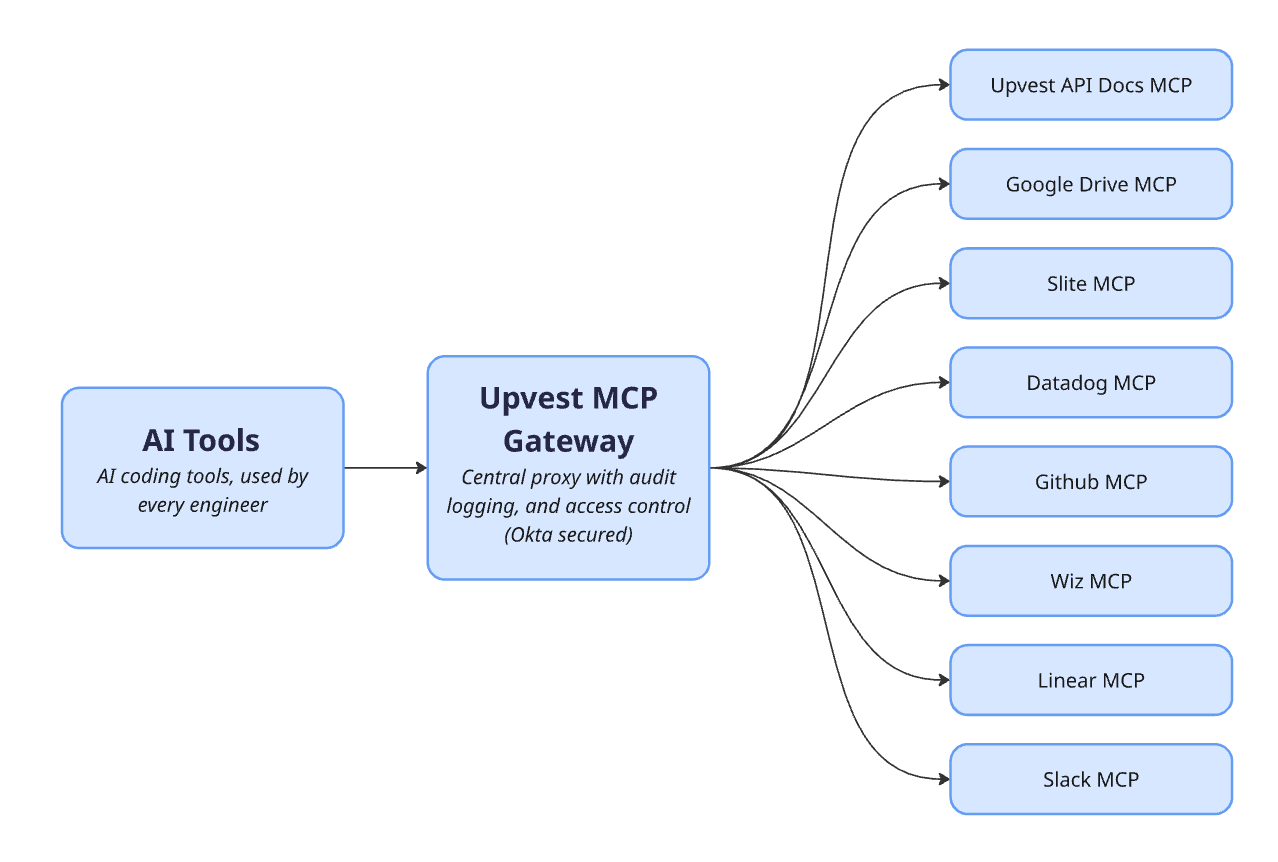

A model is only as powerful as the data it can reach. An agent that cannot see your Linear tickets, your Wiz findings, your Slite docs or your service code is at best an uninformed rubber-ducking partner. It will give you a clean answer based on nothing in particular.

That is why we built the Upvest MCP Gateway. It sits between our engineers' AI tools and the internal systems they care about. Linear, Wiz, GitHub, Datadog, Slite, our internal documentation and Cloudflare's own docs all flow through it. Authentication runs through Okta. Adding a new MCP server requires a security request, and that request triggers a risk assessment before anyone can wire it in.
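To make the approval rule concrete, here is a minimal sketch of a registry that refuses to expose an MCP server until its security request has passed a risk assessment. It is an illustration under stated assumptions, not the Gateway's actual implementation: `McpRegistry`, `SecurityRequest` and `RiskStatus` are hypothetical names invented for this example, and Okta authentication is left out entirely.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskStatus(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class SecurityRequest:
    """Hypothetical record of a request to add an MCP server."""
    server_name: str
    status: RiskStatus = RiskStatus.PENDING


@dataclass
class McpRegistry:
    """Hypothetical registry: a server is reachable only after approval."""
    requests: dict = field(default_factory=dict)
    enabled: set = field(default_factory=set)

    def request_server(self, name: str) -> SecurityRequest:
        # Filing the request is what starts the risk assessment.
        req = SecurityRequest(server_name=name)
        self.requests[name] = req
        return req

    def complete_assessment(self, name: str, approved: bool) -> None:
        # Record the outcome of the risk assessment for this server.
        req = self.requests[name]
        req.status = RiskStatus.APPROVED if approved else RiskStatus.REJECTED

    def enable(self, name: str) -> None:
        # Refuse to wire in any server without an approved assessment.
        req = self.requests.get(name)
        if req is None or req.status is not RiskStatus.APPROVED:
            raise PermissionError(f"{name}: no approved risk assessment")
        self.enabled.add(name)
```

The design choice worth noting is that `enable` fails closed: a server with no request on file is treated the same as one whose assessment was rejected.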

The Gateway is not groundbreaking technology, but it is a major enabler. With it, a new joiner can connect to a dozen internal tools in one click, and tokens stay where they belong.

With agents in the loop, every engineer is effectively the team lead of their own small team, planning, delegating, reviewing and steering while specialised agents work in parallel on separate worktrees. The work used to be one feature at a time, with stretches of waiting on builds and feedback. Now an engineer's attention goes where judgement actually matters. Productivity and impact are up. The work one good engineer can drive in a sprint now looks more like what a small team would have shipped a year ago.

Even with the toolkit, the budget and the Gateway in place, adoption was uneven. Some teams flew while others stalled. People were learning by watching their power-user colleagues, and that was helpful, but it was not enough on its own.

So we ran a structured learning programme across the company. We started with an organisation-wide baseline session on what Claude is and is not, so every employee started from the same place. Then we ran department-specific Claude Cowork sessions for Finance, Strategy, Growth, Product, Legal, Compliance and Operations, each tuned to the work that team actually does. Finance modelled scenarios. Legal reviewed contracts. Operations automated checks. The brief to every team was the same: bring something real to the workshop, and leave understanding how AI can help automate it.

Beyond the structured training, we lean on people outside the company. We work with AI experts from around the globe, and our Board Non-Executive Directors (Alexander Matthey and Eric Sager) play a meaningful role in bringing best practices from other companies and sectors into how we think. We also encourage engineers to attend AI events, workshops and hands-on sessions, and we treat this as part of the job. Every engineer who attends is asked to share what they learned internally, or to build a new skill into the toolkit.

One meta-learning sits underneath all of this. Organic adoption gets you started, but it does not get you to scale. The Engineering AI Guild gave us our first wave of velocity, the structured training brought everyone to a baseline, and external input keeps the bar moving. But maintaining a common way of working across a whole company needs an enablement function that owns it. Keeping people up to speed, curating the toolkit, evaluating new tools, and folding what the Guild discovers back into something every team can use, all of that needs a clear owner. This is one of the directions we are investing in next.

Our Security team has been at the centre of this work from the beginning. It has kept the company aware of the real risks of moving fast with new tools, such as prompt injection and over-permissioned agents, while making sure those risks have practical, documented mitigations, including clear guidance on what data can flow where.

AI is new. The industry is moving fast. Having a security team in the room from day one (as opposed to bolted on at the end) is what makes that manageable.

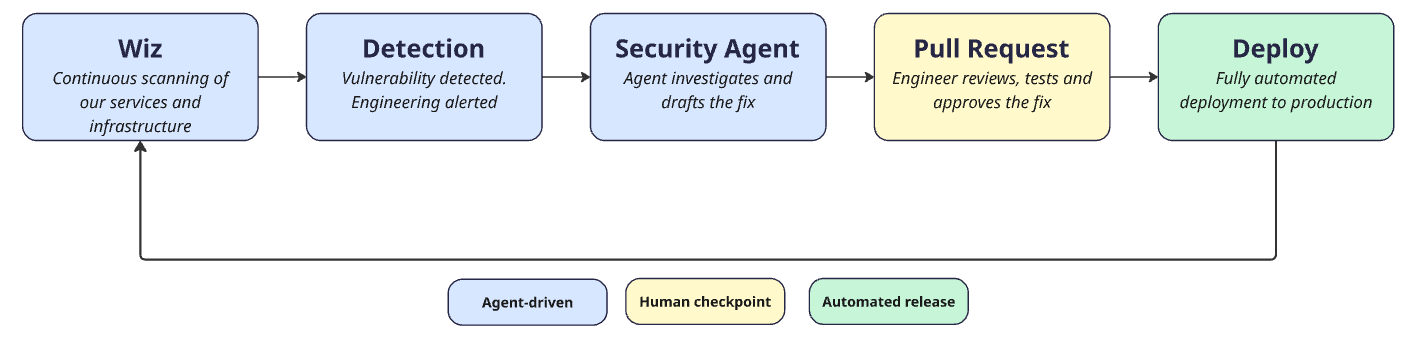

There is a sharper edge to this, too. Models keep crossing thresholds, with Anthropic's Mythos being the latest example, but the trend is broader. The speed at which vulnerabilities can be discovered and exploited is no longer capped by how fast someone can type. AI accelerates the loop on both sides of the firewall, and our defence has to keep up.

We have rolled out Wiz across our codebase and our cloud infrastructure for continuous vulnerability scanning. We are now experimenting with agentic vulnerability remediation: the moment a finding surfaces, a Security Agent picks it up, investigates, drafts a fix and opens a pull request for an engineer to review, test, approve and release. The end goal is for classes of vulnerabilities that used to sit in a backlog for days to close in hours. The human is still in the loop, but the loop now moves at a pace that matches the threat.
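The division of labour in that remediation flow can be sketched as a small state machine in which the agent may advance a finding only as far as an open pull request, and everything after that needs a human. This is a simplified illustration: the `Stage` names, the `"agent"` and `"human"` actor labels and the `advance` function are hypothetical, and the human steps of review, test and approval are collapsed into a single `APPROVED` stage.

```python
from enum import Enum


class Stage(Enum):
    SURFACED = 1
    INVESTIGATED = 2
    FIX_DRAFTED = 3
    PR_OPENED = 4
    APPROVED = 5
    RELEASED = 6


# Stages the agent is allowed to move a finding into.
# Anything later in the flow requires a human actor.
AGENT_STAGES = {Stage.INVESTIGATED, Stage.FIX_DRAFTED, Stage.PR_OPENED}


def advance(current: Stage, actor: str) -> Stage:
    """Move a finding one stage forward, enforcing who may do so.

    `actor` is "agent" or "human" (hypothetical labels for this sketch).
    """
    if actor not in ("agent", "human"):
        raise ValueError(f"unknown actor: {actor}")
    if current is Stage.RELEASED:
        raise ValueError("finding is already released")
    next_stage = Stage(current.value + 1)
    if actor == "agent" and next_stage not in AGENT_STAGES:
        raise PermissionError(f"agent may not move a finding to {next_stage.name}")
    return next_stage
```

For example, three calls with `actor="agent"` take a finding from `SURFACED` to `PR_OPENED`; a fourth raises `PermissionError`, and only `actor="human"` can carry it on to `APPROVED` and `RELEASED`.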

Being a regulated investment infrastructure company in Europe and the UK demands a clear set of AI guardrails, and we have done the groundwork to define them: how we evaluate vendors, where data can flow, how we audit what agents do, and where human approval stays mandatory. Compliance and AI value are not a trade-off for us, and the work we have done on these guardrails is what lets us use AI confidently in a regulated environment.

One example is data residency. Under GDPR, no PII can be stored outside the EU without recognised equivalent protection. We choose our AI tools with that constraint at the front of the conversation. Any tool that cannot meet this requirement, regardless of how brilliant it may be, does not get access to PII. Client and end-user data are our biggest assets, and the trust around them is not something we are willing to trade for speed.
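A guardrail of this kind can be expressed as a simple allow-list check applied before any payload is routed to a tool. The sketch below is an assumption-laden illustration rather than a description of our tooling: `ToolProfile`, its two boolean fields and both functions are hypothetical, and a real vendor assessment would capture far more than two flags.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolProfile:
    """Hypothetical vendor-assessment record for an AI tool."""
    name: str
    stores_data_in_eu: bool
    has_equivalent_protection: bool  # recognised safeguard for storage outside the EU


def may_receive_pii(tool: ToolProfile) -> bool:
    # PII is allowed only with EU storage or recognised equivalent protection.
    return tool.stores_data_in_eu or tool.has_equivalent_protection


def tools_allowed_for(payload_contains_pii: bool, tools: list) -> list:
    """Return the names of tools that may process the payload."""
    return [
        tool.name
        for tool in tools
        if not payload_contains_pii or may_receive_pii(tool)
    ]
```

A tool that fails the check is not banned outright in this sketch; it simply never appears in the list for payloads that contain PII.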

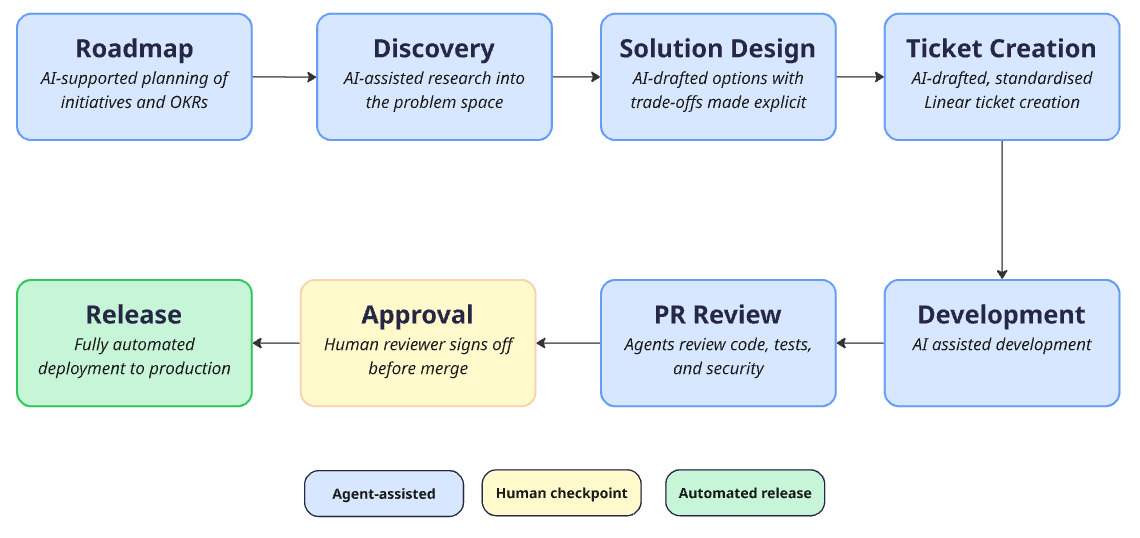

With AI in the loop, it became clear that producing code is not the most expensive part of our SSDLC. The shape of the lifecycle has evolved, and so has the location of the cost. The expensive parts are the ones that always mattered most: understanding the opportunity, aligning on what to build, and proving it is safe to ship.

Knowing where the bottleneck actually resides is harder than it sounds. To stay close to it, we partnered with GetDX. They give us continuous insight across the SSDLC into how our engineers work, what is helping them ship, and what is holding them back. That data has reshaped how we prioritise productivity and developer experience improvements.

For example: when eighty percent of our feature development carries an AI fingerprint, manual review becomes a chokepoint. The kinds of mistakes shift. Logic that looks plausible but skips a regulatory edge case. A test that asserts the same incorrect assumption as the implementation. A subtle pattern that does not match how the rest of the codebase handles the same problem. Reviewers are spending more time on each pull request as a result.

Our review process is changing in response. We have started experimenting with pull requests being reviewed by a panel of specialised agents before they get to a human: a Security Engineer agent, a Performance agent, a Go Developer agent and a Platform Engineer agent. They check different things, and they check each other. The goal is to cover as much ground as possible and increase the confidence level in the final recommendations.

Every PR still ends with a human reviewer who reads the code, understands it, and approves it. That is non-negotiable. Our clients depend on the code we ship, and nothing reaches production at Upvest without a person standing behind it.
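The relationship between the agent panel and the human reviewer can be sketched as follows: the panel produces a recommendation and a list of concerns, but only a named human approval makes a pull request mergeable. The types and function names here (`AgentReview`, `PullRequest`, `panel_recommendation`, `can_merge`) are hypothetical, and whether unresolved agent concerns should also block a merge is a policy choice this sketch deliberately leaves open.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class AgentReview:
    """Hypothetical verdict from one specialised review agent."""
    reviewer: str
    approved: bool
    notes: str = ""


@dataclass
class PullRequest:
    """Hypothetical pull request carrying agent and human review state."""
    title: str
    agent_reviews: list = field(default_factory=list)
    human_approved_by: Optional[str] = None


def panel_concerns(pr: PullRequest) -> list:
    # Collect the notes of every agent that did not approve.
    return [f"{r.reviewer}: {r.notes}" for r in pr.agent_reviews if not r.approved]


def panel_recommendation(pr: PullRequest) -> str:
    # The panel only recommends; it never approves on its own.
    return "request changes" if panel_concerns(pr) else "approve"


def can_merge(pr: PullRequest) -> bool:
    # No agent verdict substitutes for a person standing behind the change.
    return pr.human_approved_by is not None
```

Even when every agent approves, `can_merge` stays `False` until `human_approved_by` is set.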

One lesson this work has reinforced is how much tests matter. AI is non-deterministic by nature, and a solid, deterministic test suite across the whole codebase is what gives us the confidence to ship anyway. Investing in test coverage and reliability has become even more important.

Production at a regulated investment infrastructure company is unforgiving. When something breaks, every minute matters for the clients depending on us, and the cost of a wrong move during an incident can be larger than the cost of the incident itself. We have leaned into AI here as well.

Our incident response is anchored on Incident.io, the same platform we have used for years, but the AI SRE inside it has reshaped what the first seconds of an incident look like. It is integrated with Linear, GitHub and Datadog and has visibility of work, code and our detailed system behaviour. When an alert fires, the AI SRE pulls in the most recent deployments, links the related Linear tickets, surfaces the diff and system performance, and starts forming a hypothesis on root cause before the on-call has finished reading the page. By the time a human is in the channel, the most likely root cause has been narrowed down, and a remediation path is often already proposed.
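One piece of that first-seconds triage, pulling in the most recent deployments, can be illustrated with a short sketch. It says nothing about how Incident.io works internally: the `Deployment` record, the `recent_deployments` function and the one-hour default window are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Deployment:
    """Hypothetical deployment record."""
    service: str
    finished_at: datetime


def recent_deployments(
    deployments: list,
    alert_time: datetime,
    window: timedelta = timedelta(hours=1),
) -> list:
    """Return deployments that finished within `window` before the alert.

    Results are ordered newest first, since the most recent change is
    usually the first candidate worth inspecting.
    """
    earliest = alert_time - window
    candidates = [
        d for d in deployments if earliest <= d.finished_at <= alert_time
    ]
    return sorted(candidates, key=lambda d: d.finished_at, reverse=True)
```

Deployments that finished after the alert fired are excluded, because they cannot have caused it.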

The on-call engineer still owns the call. The AI SRE does not roll back production by itself, and it does not page clients. But the time from alert to confident hypothesis has dropped meaningfully, and the post-incident document is much closer to publishable on the first pass. Human attention now reaches the parts that need judgement sooner, instead of being spent on information gathering.

We are still at the beginning. The compounding is impressive. The shape of the work is changing. The people on our team who lean in the most are getting the most leverage, and we want that leverage available to everyone.

A few things we are watching closely. Skill sprawl in the toolkit will need pruning. Spend per task is creeping up as agents take on longer-running work. We need to keep investing in our infrastructure, the gateways, the playbooks, and the test environments, because that is where the leverage compounds. And we need to keep thinking about how to sustain human understanding of systems that AI is now writing parts of.

A handful of decisions have made the most difference for us, and may help others starting from a similar place. Pick a default toolchain so people have somewhere to land. Give them a real budget, and the permission to use it. Treat security as a partner, not a permit office. Invest in the infrastructure that makes adoption easy on day one and sustainable on day three hundred.

If any of this resonates, Upvest is hiring. We are looking for great engineers who are excited about AI and the investment industry, and who want to help shape what comes next.

We are also specifically recruiting Applied AI Engineers to take AI adoption at Upvest to the next level. This is the role for someone who wants to own our internal AI tooling architecture, our MCP servers and gateways, and the enablement of every team at Upvest that uses AI. If that sounds like you, please reach out.